
Tommy Geoco

Stochastic vs. Deterministic Design


Happy Friday.

We just dropped our breakdown of "Agentic Design", an intentionally nerdy video essay for designers quietly building agent harnesses inside their workflows.

If you're not deep in this yet, stick around — the next video is the practical version.

Also: Detach tickets for our Config afterparty are live. Early-bird pricing is available, and we always sell out, so get in early. We're hosting alongside Jesse Showalter, Ridd, and Ioana Teleanu.

Every year it's the event to be at if you’re going to Figma’s conference.

– Tommy (@designertom)

TOGETHER WITH FRAMER

Framer shipped twice this week on the same bet: the canvas is a real-time rendering surface, not a layout tool.

Tuesday, Framer’s CMS got a major redesign: more powerful, and the team called it "only the beginning." Wednesday, they shipped Logo Shaders, a native shader primitive for animating brand marks directly on the canvas. No plugin layer. No After Effects handoff. GPU-fidelity motion as a first-class object next to buttons and containers.

Most design tools treat shader work as something you export to. Framer treats it as something you compose in. That's a specific position — one I'll come back to below.

Stochastic vs. Deterministic Design

Something happened in design tooling over the last few weeks that will read, in six months, like the week the fork became clear.

Last week, Anthropic made headlines by releasing Claude Design. But here’s the pattern that showed up in the last five days.

Monday. Mason Warner at Twin posted: "Pre-built UI is dead. All software is moving to stochastic interfacing."

In plain English: the interface itself becomes a live render. No static file. No component library. Just a model emitting UI per user, per session. Call that stochastic design. Random by design — never the same twice.

Tuesday morning. Google open-sourced DESIGN.md — a declarative spec for design rules that agents can read and validate against WCAG. Design intent becomes agent input. Rule-based, taxonomic, enforceable. Call that scholastic design.
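To make "validate against WCAG" concrete, here's a minimal sketch of one check an agent could run: the WCAG 2.x contrast ratio between a text color and its background. The `RULES` dict and the `passes` helper are my own hypothetical stand-ins, not the actual DESIGN.md format, which I'm not reproducing here. The luminance and contrast formulas follow the WCAG 2.x definitions.

```python
# Hypothetical rule set for illustration only; NOT the real DESIGN.md schema.
RULES = {"min_contrast": 4.5}  # WCAG 2.x AA threshold for normal-size text


def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """Contrast ratio between two colors, from 1.0 up to about 21.0."""
    lighter, darker = sorted((luminance(fg), luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes(fg: str, bg: str, rules: dict = RULES) -> bool:
    """True if the color pair meets the minimum contrast in the rule set."""
    return contrast_ratio(fg, bg) >= rules["min_contrast"]


if __name__ == "__main__":
    for fg in ("#767676", "#777777"):
        print(fg, "on white:", round(contrast_ratio(fg, "#ffffff"), 2), passes(fg, "#ffffff"))
```

The point is not the math. The point is that once the threshold lives in a file, the check is something a pipeline can run on every generated screen instead of something a reviewer has to remember.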

Tuesday afternoon. I recorded a podcast with George Hastings. George is a solo design engineer. Unicorn.Studio is his WebGL tool — shader-style scenes you compose by hand and export as finished artifacts. His position: the designer stays the final authority. One artifact at a time. Call that deterministic design — same inputs, same outputs, the file is the work.

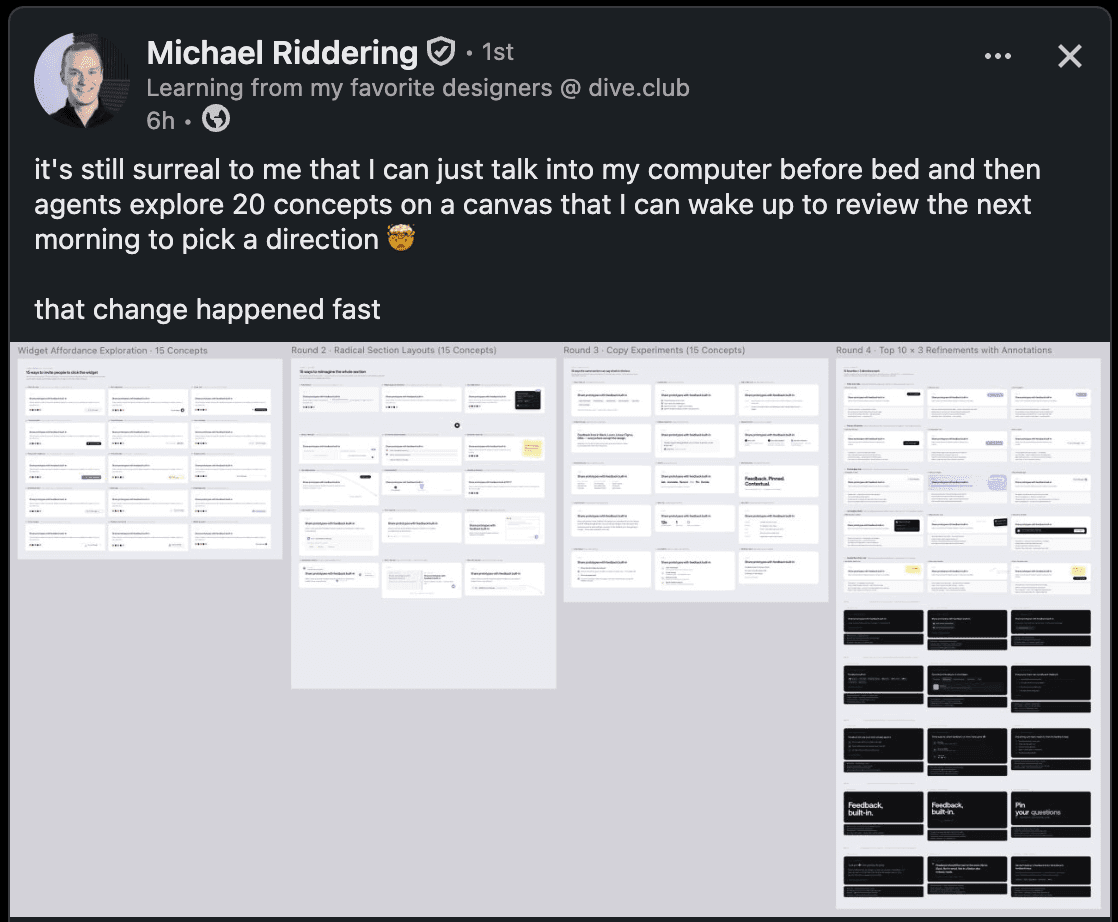

Wednesday. I sat down with Tom Krcha. Tom built Pencil.dev — an agent-first design canvas where files live in your git repo in an open, version-controlled format. SWARM mode runs multiple AI design agents in parallel, generating 20–30 screens while you sleep. Stochastic agents, deterministic output. The hybrid.

Thursday. Magic Patterns shipped Agent 2.0, complete with MCP, skills, and a range of connectors to support agentic design. This follows a clear trend: design software is making room for agents as an ICP (ideal customer profile), not just as a tool.

Multiple bets on the same thing, in the same week.

Stochastic — the UI is alive, rendered per user, per prompt. No file.

Scholastic — the rules are alive, agent-readable, enforceable in the pipeline. The file is a spec.

Deterministic — the designer is alive, in the canvas, making one thing at a time. The file is the work.

The "new design tools" you're currently choosing between aren't competing on feature surface anymore. They're competing on which bet about design labor wins.

Figma Make, Paper, MagicPath, Pencil, Stitch, Claude Design, Unicorn Studio, Magic Patterns — plot every one of them on this axis and it's the most honest map you'll get.

And for most teams, the answer is probably not any of the three. It's a stack.

Your design system lives in a DESIGN.md-style file: scholastic.

Your agents generate variance against it overnight: stochastic.

You, the designer, still sit in a canvas and make the final call on the things that matter: deterministic.
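Here's a rough sketch of that stack as a pipeline, under heavy assumptions. The `RULES` dict stands in for a DESIGN.md-style spec (it is not the real format), `generate_variants` stands in for overnight agents (it just draws random values), and every field name is hypothetical. What it shows is the shape: random generation, a rule check that gives the same verdict every time, and a shortlist left for a human.

```python
import random

# Hypothetical design rules; a stand-in for a DESIGN.md-style spec, not its real format.
RULES = {
    "spacing_scale": {4, 8, 16, 24, 32},
    "max_font_size": 48,
}


def generate_variants(n, seed=None):
    """Stochastic step: a stand-in for agents proposing n screen variants."""
    rng = random.Random(seed)  # a fixed seed makes a run reproducible
    return [
        {
            "id": i,
            "padding": rng.choice([4, 6, 8, 12, 16, 24, 32, 40]),
            "font_size": rng.randrange(12, 73, 4),
        }
        for i in range(n)
    ]


def violations(variant, rules=RULES):
    """Rule step: list every rule this variant breaks (empty list means it passes)."""
    problems = []
    if variant["padding"] not in rules["spacing_scale"]:
        problems.append(f"padding {variant['padding']} is off the spacing scale")
    if variant["font_size"] > rules["max_font_size"]:
        problems.append(f"font size {variant['font_size']} exceeds the maximum")
    return problems


def shortlist(variants, rules=RULES):
    """Keep only rule-abiding variants; the designer makes the final call on these."""
    return [v for v in variants if not violations(v, rules)]


candidates = generate_variants(30, seed=7)
for_review = shortlist(candidates)
print(f"{len(for_review)} of {len(candidates)} variants pass the rules")
```

Drop the seed and every run proposes something different. Keep the rules file and every run is judged the same way. The last step, picking from `for_review`, is deliberately not automated.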

What I'd watch for: which big design tool names the frame first. Whoever does it owns the category. I'd also watch whether Figma starts talking about DESIGN.md-style rules publicly by end of Q2. If they do, they're playing on all three axes at once, which is the only defensible position from here.

I dive deeper into this in today’s video. Check it out.

Quick Hits

GPT-5.5 shipped — and the pitch is not "smarter chat." It's harness + agentic + computer use. 82.7% on Terminal-Bench, 58.6% on SWE-Bench Pro, matches 5.4 per-token latency while using fewer tokens. API held back while ChatGPT and Codex ship first.

GPT-Image-2 broke away — per Arena's text-to-image trend chart Monday, OpenAI's new image model jumped to 1,512, 242 points clear of Google's Nano Banana at #2. First real separation in the top tier in months. If your agent harness is leaning on image generation, this is a meaningful step, not a press release.

Magic Patterns Agent 2.0 — live on Product Hunt today. Multi-step generation with design-system awareness. Another tool declaring a position on the stochastic-inputs / deterministic-output hybrid. Same axis, different bet.

These are tools I actually use, so I asked them to sponsor the newsletter. They said yes. The best way to support us is to check them out 👇

Framer → How I build websites without code

Mobbin → How I find design patterns fast

MagicPath → How I design in canvas

Dscout → How I run user research

That's it for today.

Next week: harnesses, up close. Mine. What's in it, how it holds, where it breaks.

If you're already building one — or you're using Pencil, Paper, MagicPath, or Magic Patterns in real work — reply. I'm collecting receipts.

See you next week,

Tommy