Research

Tommy Geoco

How research teams are keeping up with build teams

In this post

001. The Mismatch

002. The team that figured this out early

Happy Friday.

Check out the new State of Play episode with Anthropic’s Jenny Wen.

In January I wrote about discovery code and dropped this line: "The research cadence from 2023 doesn't match the build speed of 2026."

I've spent two months watching what happens when that stays true, and I’m trying to find out how more teams are navigating it.

Also: the UX Tools Prototyping Survey is live for Spring 2026. It’s short. If you create prototypes, I’d love your input. Take it here →

– Tommy (@designertom)

The Mismatch

A designer with Claude Code can generate fifteen variations in the time it used to take to spec one. I just did this when we launched our Toolbenders network. It took me a single weekend to create and ship that project.

Building used to be slow enough that conversations happened naturally in the gaps: between specs, between handoffs, between deploys. Those gaps closed, and the conversations never found a new place to happen.

Most teams I talk to are still running research the way they did two years ago. Quarterly studies. Two-week recruitment cycles. Synthesis decks that land after the feature has shipped.

Nobody recalibrated.

Figma's 2026 State of the Designer report shows:

Ninety-eight percent of designers using AI increased usage in the past year.

Eighty-nine percent say they work faster.

Fifty-six percent of open roles are senior.

Twenty-five percent are junior.

It could be that the market is skewing towards people who know what to build.

Speed is becoming table stakes.

So: how do you actually match research cadence to build speed when build speed keeps accelerating?

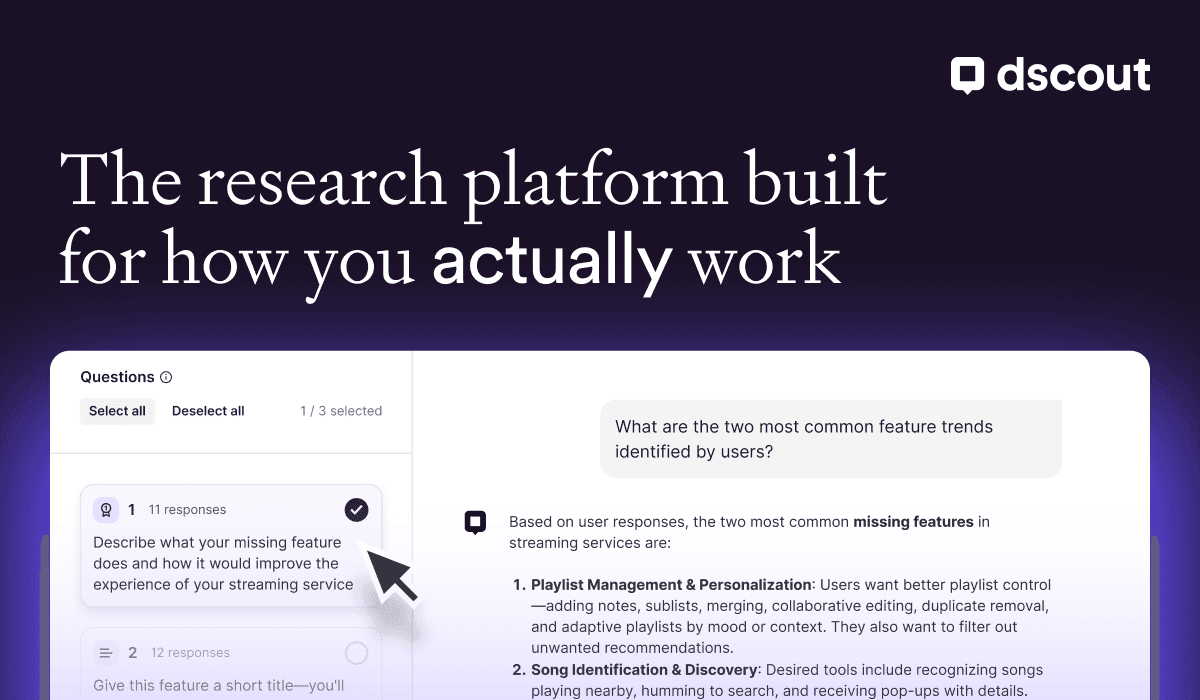

TOGETHER WITH DSCOUT

The bottleneck isn't running the research. It's everything around it — finding the right people, designing the study, getting to the insight before the sprint ends.

Dscout is an AI-powered research platform built for teams moving at this speed:

Recruit finds qualified Scouts in hours, not weeks — filtered by real behavior, not just demographics

AI Moderator runs unmoderated studies with smart follow-ups, so you get depth without the scheduling overhead

Dscout AI Study Creator helps you design and launch studies in a fraction of the time

The team that figured this out early

Brad Mattan, Senior UX Researcher at Vivid Seats.

Two researchers in a full product org. Features shipped faster than research could weigh in. It’s a common story, but what they did about it isn't.

Brad started running one or two customer conversations a week. Every week. Quick sessions timed to whatever just shipped.

Danny Zagorski, their UX manager, got designers moderating sessions. Brad built a ramp:

Note-taking. Line-by-line feedback until observations are sharp.

Translating notes into stakeholder-ready insights.

Running sessions solo.

Brad's colleague Evelyn built a screener through Dscout.

About 170 responses in a quarter. She would find someone who matched the current question, send the invite, and have it confirmed by lunch. Live-event attendees filtered by behavior, preferences, event type.

Most teams I talk to lose three days finding one right person, and by then the sprint has moved on.

Dscout's recruitment and panel tools gave Vivid Seats a pre-qualified pool they could pull from any time a question came up.

Marketing started sitting in. Watching real users react to campaigns.

One session with a visually impaired user went twenty minutes and cracked open accessibility conversations across three teams.

Developers saw users respond to things they'd shipped that same week.

Brad posted highlights in Slack and people who'd never been to a research session started asking when the next one was.

What I still don't know

I've been collecting examples of teams trying to match research speed to build speed. Weekly sessions + async testing. Designers building their own feedback loops.

Vivid Seats has the headcount and the customer base to make weekly continuous discovery work. A three-person startup doesn't have that infrastructure. A five-hundred-person company with compliance layers has a completely different set of walls.

I haven't found enough examples to map the shapes yet.

But the designers who have told me they feel hardest to replace right now have one thing in common: they've all heard a real person react to their work recently.

How that works at different scales — or whether it does — I don't know yet.

If you're running continuous discovery, or trying to, or stuck somewhere in the middle — I want to hear what you're doing. What cadence? What broke when you tried to speed it up?

Hit reply.

See you next week,

Tommy